Trust and safety

AI-generated output requires review. Visual Studio Code includes multiple mechanisms to keep you in control of what changes reach your codebase. This article explains the control mechanisms, AI limitations, and security considerations you should be aware of.

Stay in control

Agents can read files, edit code, run terminal commands, and call external services. VS Code provides several mechanisms to ensure you remain in charge of what happens in your workspace:

-

Review edits before applying. Agents show file changes in a diff view. You can review each change, accept or reject individual edits, and modify the code before saving. Learn more about reviewing code edits.

-

Use checkpoints to revert. Agent sessions create checkpoints as work progresses. If the agent takes a wrong turn, return to a previous checkpoint and try a different approach. Learn more about checkpoints.

-

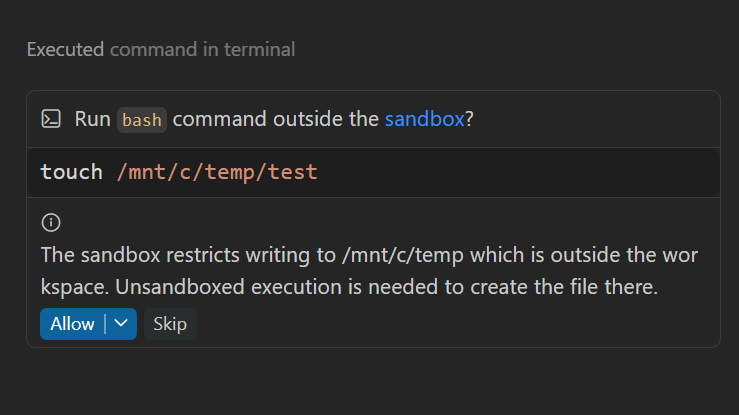

Approve tool calls. VS Code asks for your approval before running terminal commands or using tools with side effects. You control which tools can run automatically and which require confirmation. Use the Chat: Manage Tool Approval command to centrally manage approvals for all tools.

-

Choose a permission level. Control how much autonomy the agent has: Default Approvals requires confirmation for sensitive tools, Bypass Approvals auto-approves all tool calls, and Autopilot (Preview) also auto-responds to questions and continues autonomously. For higher autonomy levels, pair with agent sandboxing or a container.

-

Trust boundaries. VS Code enforces security boundaries around file access, URL access, agent sandboxing, and MCP server interactions. Learn more about AI security.

Always review AI-generated code before committing. Verify that it handles edge cases, follows your project's conventions, and doesn't introduce security issues.
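The permission levels in the list above can be read as a small decision table. The following Python sketch is only an illustrative model of that description, not VS Code's implementation; the enum, the function names, and the `tool_is_sensitive` flag are hypothetical.

```python
from enum import Enum


class PermissionLevel(Enum):
    """Permission levels as named in this article."""
    DEFAULT_APPROVALS = "Default Approvals"
    BYPASS_APPROVALS = "Bypass Approvals"
    AUTOPILOT = "Autopilot (Preview)"


def needs_confirmation(level: PermissionLevel, tool_is_sensitive: bool) -> bool:
    """Return True when the user would be asked before a tool call runs."""
    if level is PermissionLevel.DEFAULT_APPROVALS:
        # Default Approvals: only sensitive tools require confirmation.
        return tool_is_sensitive
    # Bypass Approvals and Autopilot auto-approve all tool calls.
    return False


def auto_answers_questions(level: PermissionLevel) -> bool:
    """Only Autopilot also responds to the agent's questions on its own."""
    return level is PermissionLevel.AUTOPILOT


for level in PermissionLevel:
    print(
        level.value,
        "| confirm sensitive tool:", needs_confirmation(level, True),
        "| auto-answers questions:", auto_answers_questions(level),
    )
```

The table makes the trade-off visible: every level above Default Approvals removes the confirmation step entirely, which is why the article recommends pairing those levels with sandboxing or a container.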

Agent sandboxing

Agent sandboxing is currently in preview and might evolve further.

Agent sandboxing uses operating system-level isolation to restrict what agents can access on your machine. Instead of relying solely on approval prompts before each action, sandboxing defines strict boundaries for file system and network access that are enforced by the OS itself.

VS Code currently applies sandboxing to terminal commands (runInTerminal agent tool) that are executed during an agent session. Learn how to configure agent sandboxing.

When sandboxing is enabled, VS Code automatically approves commands and tool calls without a confirmation prompt because they already run in a controlled environment.

Why sandboxing matters

Approval-based security requires you to confirm each terminal command or tool call before it runs. While this provides control, it has practical limits:

- Approval fatigue. Repeatedly approving commands can cause you to pay less attention to what you're approving, especially during long agent sessions.

- Parsing limitations. Auto-approval rules use best-effort command parsing, which has known limitations. Shell aliases, quote concatenation, and complex shell syntax can slip past the rules undetected.

- Prompt injection. Malicious content in files, tool outputs, or web pages can attempt to trick the agent into running harmful commands. If you approve such a command without careful review, it can lead to unintended actions and security risks.

- Unintended actions on external services. Even without malicious intent, an agent with network access can perform actions on your behalf that are difficult to reverse. For example, the agent might provision cloud resources, modify infrastructure settings, push code to a remote repository, or call an API that triggers a deployment or a financial transaction. Network isolation ensures the agent can only reach domains you explicitly permit, reducing the risk of unintended side effects on external services.

Sandboxing addresses these challenges by enforcing boundaries at the OS level. The sandbox prevents auto-approved commands from accessing files or network resources outside the permitted scope. If additional permissions are required, VS Code prompts you to run the command outside the sandbox.
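To make the parsing limitation concrete, here is a minimal Python sketch of how quote concatenation can defeat a text-level rule. It does not reproduce VS Code's actual auto-approval parser; the deny list and both checker functions are hypothetical, and `shlex` only approximates POSIX shell tokenization.

```python
import shlex

# Hypothetical deny list, for illustration only.
DENIED_COMMANDS = {"rm", "curl"}


def naive_is_denied(command_line: str) -> bool:
    """Text-level check: compare the first whitespace-separated word."""
    words = command_line.split()
    return bool(words) and words[0] in DENIED_COMMANDS


def shell_like_is_denied(command_line: str) -> bool:
    """Tokenize with POSIX-style quoting rules before comparing."""
    tokens = shlex.split(command_line)
    return bool(tokens) and tokens[0] in DENIED_COMMANDS


plain = "rm -rf build"
# Quote concatenation: a POSIX shell joins r, "" and m into the word rm.
obfuscated = 'r""m -rf build'

print(naive_is_denied(plain))            # the plain form is caught
print(naive_is_denied(obfuscated))       # the obfuscated form is missed
print(shell_like_is_denied(obfuscated))  # quote-aware tokenizing catches it
```

Even the quote-aware check stays best effort: aliases, variable expansion, and command substitution are resolved by the shell at run time, which is why enforcement at the OS level does not depend on understanding the command text.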

How sandboxing works

Sandboxing enforces two types of isolation: file system access and network access. Both are applied at the OS level and can't be bypassed by the commands running inside the sandbox.

File system isolation

Without file system isolation, a compromised command could modify files anywhere on your machine, for example, injecting malicious code into your shell configuration (~/.bashrc, ~/.zshrc) or reading SSH keys from ~/.ssh/. File system isolation prevents this by restricting access to explicitly permitted paths.

- Default behavior. Read access is allowed for workspace folders and the sandbox runtime temp folder. Reads from your home directory ($HOME) are denied by default to protect sensitive files such as SSH keys, shell configuration, and credentials. Write access is limited to the current working directory and its subdirectories. When a request is made that requires additional permissions, VS Code prompts you to allow running the command outside the sandbox.

- Per-command read paths. Before a command runs, VS Code parses it and grants read access to the specific paths the command needs. This covers common developer workflows such as git, node, npm, dotnet, Java, and Rust. For example, running a node command automatically allows reads from the Node version manager directory, and running a git command allows reads from ~/.gitconfig.

- Configurable rules. You can grant read or write access to additional paths, or deny read or write access to specific paths. Deny rules always take precedence over allow rules.

- Inherited restrictions. All child processes spawned by a sandboxed command inherit the same file system boundaries. This means tools like npm, pip, or build scripts are also restricted.
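The rule that deny entries take precedence over allow entries can be sketched in a few lines of Python. This is only a conceptual model: the real checks are enforced by the operating system, and the function names and example paths below are hypothetical.

```python
import posixpath
from pathlib import PurePosixPath


def _is_under(path: PurePosixPath, root: PurePosixPath) -> bool:
    """True if path is root itself or located somewhere below it."""
    return path == root or root in path.parents


def is_permitted(path: str, allow: list[str], deny: list[str]) -> bool:
    """Evaluate a path against allow and deny roots; deny always wins."""
    # Collapse ".." segments so a path cannot escape an allowed root textually.
    target = PurePosixPath(posixpath.normpath(path))
    if any(_is_under(target, PurePosixPath(d)) for d in deny):
        return False
    return any(_is_under(target, PurePosixPath(a)) for a in allow)


# Hypothetical rule set: a workspace folder plus one per-command read path.
read_allow = ["/home/dev/project", "/home/dev/.gitconfig"]
read_deny = ["/home/dev/project/.env"]

for candidate in [
    "/home/dev/project/src/main.py",     # inside the workspace
    "/home/dev/project/.env",            # allowed root, but explicitly denied
    "/home/dev/.ssh/id_rsa",             # home directory, never allowed
    "/home/dev/project/../.ssh/id_rsa",  # traversal attempt
]:
    print(candidate, "->", is_permitted(candidate, read_allow, read_deny))
```

Checking the deny list first means a broad allow rule, such as the whole workspace, can never re-expose a path that was explicitly denied.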

Network isolation

Without network isolation, a compromised command could exfiltrate sensitive data or perform unintended actions on external services. Network isolation prevents this by blocking all outbound connections by default.

When chat.agent.sandbox.enabled is set to on, all outbound network access is blocked unless you explicitly allow specific domains. If you want file system isolation but need unrestricted network access, set chat.agent.sandbox.enabled to allowNetwork. In this mode, commands can reach external services freely while file system restrictions still apply.

VS Code provides network domain filtering that applies to both agent tools (fetch tool, integrated browser) and sandboxed terminal commands. Enable chat.agent.networkFilter to activate network filtering. Use chat.agent.allowedNetworkDomains and chat.agent.deniedNetworkDomains to control which domains the agent can access. Learn how to configure network access.

- Domain allowlist. You can explicitly permit access to specific domains.

  Caution: The agent can perform actions on allowed domains on your behalf, not just read data. For example, allowing api.github.com means the agent could create pull requests or modify repository settings. Allowing a cloud service API domain could lead to cloud resource modifications. Only configure this setting if absolutely required. This configuration is specified in a setting and applies to all agent tools and sandboxed commands, not only the current task.

- Inherited restrictions. All child processes inherit the same network restrictions, so scripts or tools that spawn subprocesses cannot bypass the network rules.
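A default-deny domain filter of the kind described above might behave roughly like the following Python sketch. Several details are assumptions, not documented VS Code behavior: that an entry also matches its subdomains, and that denied domains win over allowed ones (the article states deny precedence only for file system rules). The function names are hypothetical.

```python
def _normalize(host: str) -> str:
    """Lowercase the host and drop any trailing dot."""
    return host.strip().lower().rstrip(".")


def domain_matches(host: str, rule: str) -> bool:
    """Assumption: a rule matches the domain itself and its subdomains."""
    host, rule = _normalize(host), _normalize(rule)
    return host == rule or host.endswith("." + rule)


def may_connect(host: str, allowed: list[str], denied: list[str]) -> bool:
    """Default deny: a host is reachable only if allowed and not denied."""
    if any(domain_matches(host, rule) for rule in denied):
        return False  # assumption: deny entries take precedence
    return any(domain_matches(host, rule) for rule in allowed)


allowed = ["api.github.com"]
denied: list[str] = []

for host in [
    "api.github.com",                    # explicitly allowed
    "example.com",                       # not listed, blocked by default
    "api.github.com.attacker.example",   # lookalike host, not a subdomain
]:
    print(host, "->", may_connect(host, allowed, denied))
```

Matching on a leading dot rather than a bare suffix is what keeps lookalike hosts from passing. Note that the filter decides only whether a domain is reachable, not what the agent does there, which is the point of the caution above.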

OS-level enforcement

Agent sandboxing relies on OS-level security primitives to enforce file system and network restrictions. Because the enforcement happens at the kernel level, sandboxed processes and all of their child processes cannot bypass these boundaries, even if a command is crafted to attempt it.

| Platform | Technology | Prerequisites |
|---|---|---|
| macOS | Apple's sandboxing framework ("Seatbelt"), built into the operating system. Enforces fine-grained file system and network restrictions at the kernel level. | None. Works out of the box. |
| Linux and WSL2 | bubblewrap for file system isolation and socat for network proxying. | Install required packages: sudo apt-get install bubblewrap socat (Debian and Ubuntu) or sudo dnf install bubblewrap socat (Fedora). |

WSL version 1 is not supported because bubblewrap requires Linux kernel features (user namespaces) that are only available in WSL2.

Agent sandboxing support on Windows currently uses WSL2 as the underlying platform.
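On Linux or WSL2, you can check the prerequisites from the table before enabling sandboxing. The following Python sketch looks for the two executables on your PATH; the helper name is hypothetical, and it relies on the bubblewrap package installing its binary as bwrap.

```python
import shutil

# Package name -> executable name it installs (bubblewrap ships as "bwrap").
REQUIRED_TOOLS = {"bubblewrap": "bwrap", "socat": "socat"}


def missing_prerequisites(which=shutil.which) -> list[str]:
    """Return the package names whose executables are not on PATH."""
    return [pkg for pkg, exe in REQUIRED_TOOLS.items() if which(exe) is None]


missing = missing_prerequisites()
if missing:
    print("Missing packages:", ", ".join(missing))
else:
    print("bubblewrap and socat are available.")
```

The `which` parameter exists only so the lookup can be replaced in tests. The check confirms that the binaries are installed, not that user namespaces are enabled on your kernel.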

What sandboxing does not cover

Agent sandboxing applies only to shell subprocesses (terminal commands). It does not cover built-in file tools. The agent's read, edit, and write tools use VS Code's permission system directly, rather than running through the sandbox.

The chat.agent.networkFilter setting provides network domain filtering for agent tools like the fetch tool and integrated browser, independently of sandboxing. When both sandboxing and network filtering are enabled, network rules apply to all agent tools and terminal commands.

To control operations performed by the built-in file tools, use the review flow and sensitive file protection.

For full environment isolation, pair sandboxing with a dev container. Dev containers provide a complete boundary around the entire development environment, including all tools, file access, and network access.

Agent sandboxing is currently in preview and continues to evolve to cover more tools and scenarios.

AI limitations to watch for

Incorrect output. Models can generate code that looks correct but contains bugs, uses deprecated APIs, or doesn't handle edge cases. Always test AI-generated code, especially for logic that affects security, data integrity, or critical flows.

Prompt injection. Malicious content in files, tool outputs, or web pages can attempt to redirect the agent's behavior. This is why VS Code includes tool approval gates and trust boundaries. Learn more about AI security.

Treat AI-generated output as a first draft: useful as a starting point, but always requiring your review and judgment. For more on how models work, including nondeterminism, knowledge boundaries, and context limits, see Language models.